Cross-layer Learning

Kasia enters the grocery store with a swipe, using her smartphone. She’s a woman on a mission following a clearly defined shopping list: apples, avocado, bread, mozzarella cheese, soup and maybe that delicious ready-made quinoa salad. As she readily hand-picks the packages from the shelves to a raffia bag, Kasia resembles a maestro, fiercely conducting a perishables orchestra. Sounds familiar? This is a three-way symphony: entering the store, getting the products and exiting the premises. No money, no lines. Cashless and cashier-less. What once seemed to be a visionary essay about the future of retail is becoming a reality, driven by a new urban slash millennial slash 21st century customer, relentlessly seeking improved in-store experiences.

Deep learning is one of the key technologies behind the current “AI (Artificial Inteligence) revolution”, increasingly powering the interactions Kasia will experience when visiting her local store. Deep learning uses Neural Networks (NNs), which have been around for a while – going back to the 60’s when Frank Rosenblatt introduced the Perceptron artificial neuron. Since then many advances in computational capabilities have made it possible to link up artificial neurons into many different layers, with each layer successively processing outputs from the previous layer and providing the inputs to downstream neurons, generating what we’ve come to know as Deep Neural Networks.

Confused? For now just consider that a Deep Neural network is capable of processing the inputs fed into it, transform those in a way enabling it to learn how to carry out a certain task, provided enough data is available for it to be trained with a high degree of success. Given that deep learning in general is data hungry and that retail is data-rich – to the tune of circa 1.4 billion shopping baskets at our stores per year- we have a solid match, in that this technology enables us to tackle many of the challenges posed by new types of data being collected at scale across several dimensions of the store: transactional data, loyalty data, video and image data, amongst others.

One area that holds great promise is Computer Vision. If you think of Kasia picking the products to her raffia bag with no need to scan them, Computer Vision – powered by deep learning – is the technology that allows the trustworthy recognition of products and brands carried out of the store by Kasia. Amazon Go is a fairly well known example of a newer test format using this type of technology, and its promise of “frictionless shopping” is being made possible by the heavy use of advanced Computer Vision and Deep Learning algorithms.

Furthermore, if we can train Deep Learning algorithms to recognize and identify products at scale based on images and/or footage, we can broaden out the use cases. One example that is getting attention is Out Of Stock (OOS) identification. With the right video infrastructure, we can start collecting regular data from our shelves, and train Deep Neural Networks to identify situations in which the product is missing from the shelf – a so called OOS.

Many retailers are currently embarking on pilots with these technologies, often debuting with Computer Vision applications. One such example is the recently announced launch of Walmart’s Intelligent Retail Lab (IRL) in New York. Although many of these applications are still in “pilot” mode, initial results certainly look promising. Therefore it’s no surprise that many retailers are investing in greater machine learning (ML) and AI capabilities, both hardware (infrastructure), people and software (tools).

READY TO GO DEEPER?

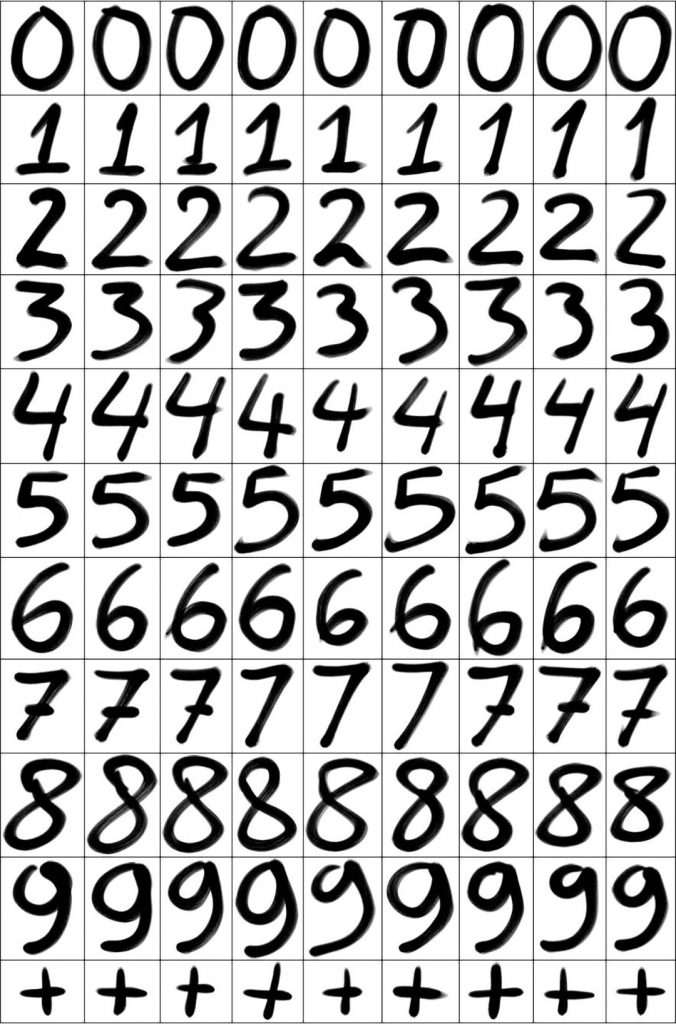

What do we mean when we talk about “Learning”? This is the process through which a network learns how to carry out a specific task, for example recognizing a Stop Sign at an intersection in the case of a camera-equipped self-driving car or learning to recognize digits handwritten by different people. The latter is a commonly used task for benchmarking Deep learning approaches, using a widely known dataset – the MNIST dataset, as the so called “training” dataset. Training data typically refers to the data we feed a network so that it can learn how to carry out a specific task.

Some examples of the MNIST dataset – a dataset that is often used as a benchmark for deep learning projects. This dataset contains 10 million different handwritten digits and their corresponding labels.

Armed with millions of examples of different observations (in the case of the MNIST dataset different digits handwritten by different people) and their corresponding labels – for example, that a certain handwritten digit actually corresponds to an “8”, the deep learning network will learn how to classify digits that it hasn’t “seen” before – i.e new handwritten digits – based on learning carried out with the originally labeled data. Example of a learning task – recognizing handwritten digits (in this case, an “8”) taking as input the pixel values of the image and providing as the output of the Neural Network a final class label.

The above example of image classification – teaching a Deep Neural Network to recognize handwritten digits – is an example of a Supervised Learning problem, and modern Deep Neural Network architectures excel at these types of problems provided enough data is available. In the simplest case, the training process of a network yields a set of “weights”, which are parameters that each individual neuron uses to either augment or diminish the signal it receives, before feeding the output of a simple calculation to downstream neurons. The final set of learned weights (in some cases, millions of parameters) provides us with what we call the final trained Deep Neural Network.

SUGGESTED READING

Despite not being able to cover the ins & outs of the training process in this article, the key point to note is that after such a training process is carried out our network of neurons will have learned the weights to apply to each input (such as pixels from an image) to carry out a specific task. The fact that deep learning is so data hungry, often requiring millions of data points for successful training, is usually highlighted as one of its main weaknesses. After all, humans do not need to be hit millions of times by a car to learn that it’s not a positive action. Hence, a currently very active area of research is transfer learning – a technique in which models trained for a specific task are re-deployed on a second related, often adjacent task, in this way leveraging the past training already carried out, rather than having to start the process from scratch.

Deep Learning Illustrated: A Visual, Interactive Guide to Artificial Intelligence (2019)

A visual, intuitive, and accessible, and yet comprehensive introduction to the discipline’s techniques and applications.